Assignment Builder

Ahead of back-to-school, Newsela rolled out new features in a split A/B test to give teachers more control when creating assignments.

Working with Product Managers and a Data Analyst, I led user interviews to assess the value of these new features. My insights showed which features were performing well and why, helping our team prioritize what to refine and deploy in a full-scale release.

Project Type: UX Research

Role: Sr. UX Researcher

Organization: Newsela

Timeline: 2 months

Team Size: 4 members

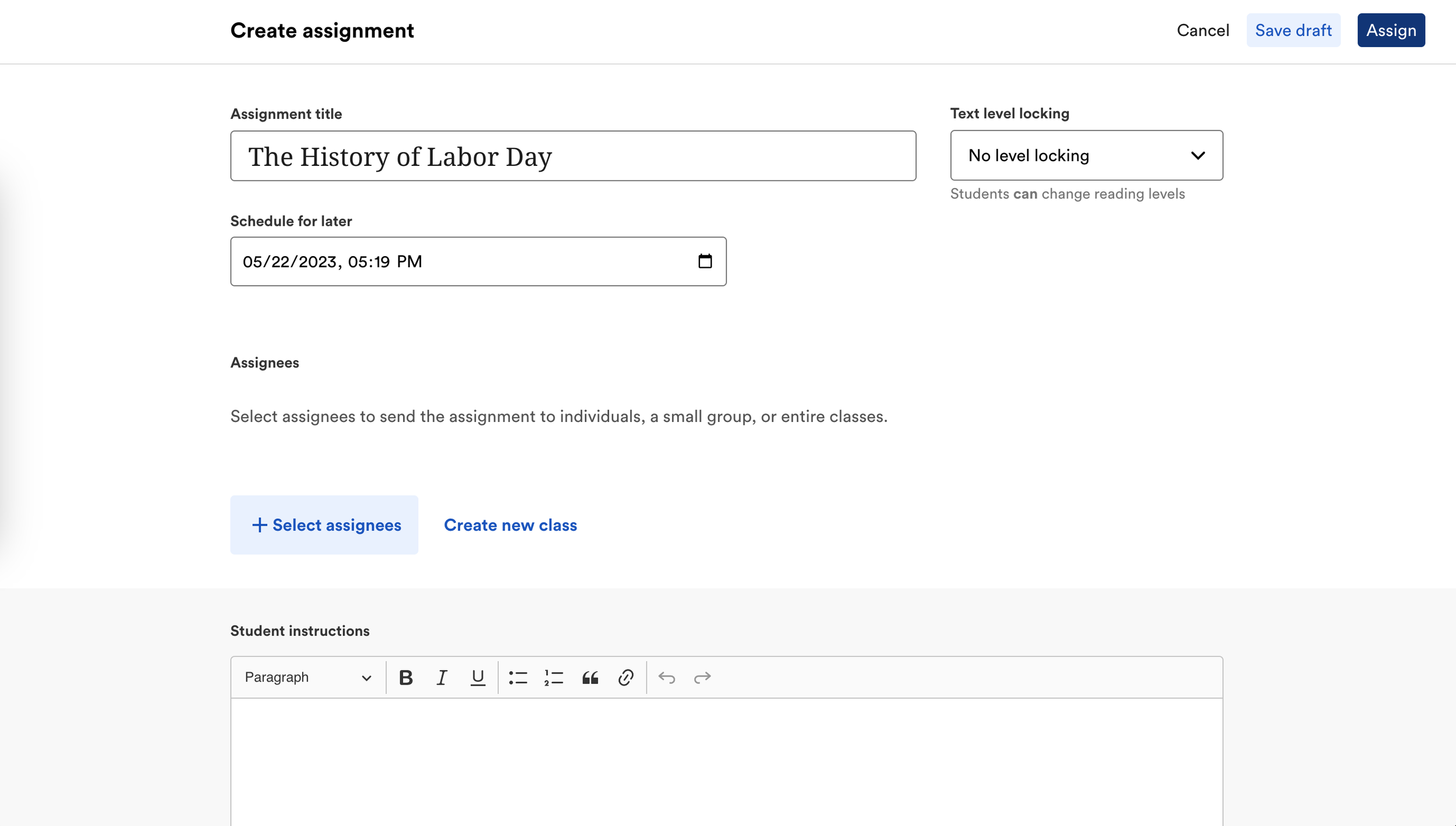

The control group (80% of users) saw the current assignment builder

The experiment group (20% of users) saw the new assignment builder
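For readers curious how an 80/20 split like this can be kept stable per user, here is a minimal sketch. It is not Newsela's implementation: the function name `assign_group`, the salt string, and the hashing approach are all assumptions made for illustration. The idea is to hash each user ID into a number between 0 and 1 and place the lowest 20% in the experiment group, so a teacher sees the same builder on every visit.

```python
import hashlib


def assign_group(user_id: str,
                 experiment_share: float = 0.20,
                 salt: str = "assignment-builder") -> str:
    """Deterministically place a user in 'experiment' or 'control'.

    Hypothetical helper: the salt and the 0.20 default mirror the
    80/20 split described above, not any real configuration.
    """
    # Hash the salted user ID so the same user always gets the same bucket.
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash onto the range [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "experiment" if bucket < experiment_share else "control"
```

Because the assignment depends only on the user ID and the salt, no lookup table is needed, and changing the salt reshuffles users for a different test.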

[ Background ]

From past user research, data, and customer feedback, we knew that teachers were missing key assignment features and were using off-platform workarounds to meet their needs.

So, the product team prototyped a set of new features (e.g., the ability to lock reading levels, set start/due dates, and assign specific activities).

We wanted to see which features brought the most value to users, and consequently, the most value to the company.

[ Process ]

In building the test plan, I defined the test objective, research questions, participants, methods, and schedule.

Test Plan Details

Test Objective: Assess the value of new features in the assignment flow. Insights will help inform what we ultimately refine and deploy.

Research Questions:

What features are working well? Why are they valuable?

What features are not working as well? How can they be better?

What other features would help teachers in their workflow?

Participants: 15 teacher users in the experiment group

Methods: Moderated teacher user interviews, A/B testing

An Agile Process

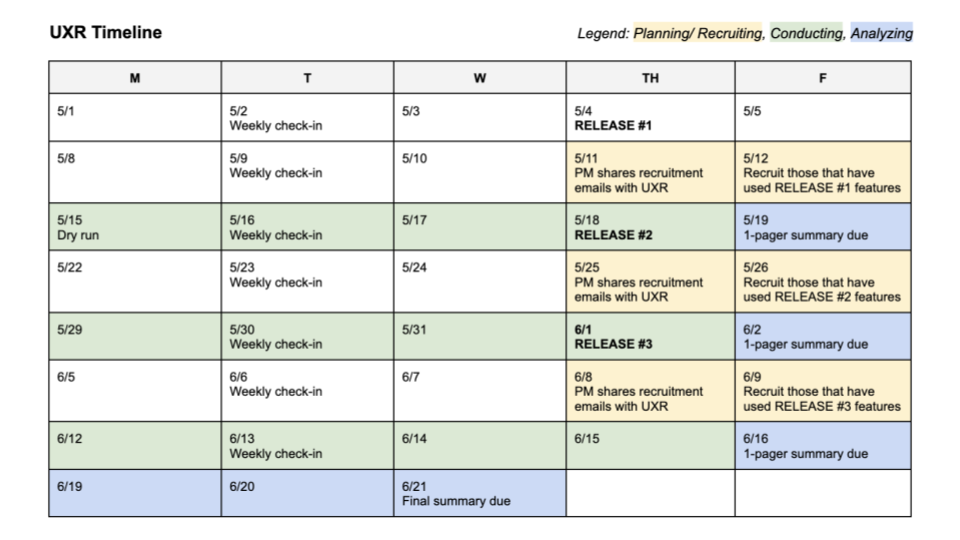

As features were released to the experiment group on a biweekly basis, I designed a testing schedule to reflect this cadence and to work in lock-step with my product counterparts.

The Product Managers and the Data Analyst reviewed data (from Heap) to identify areas for inquiry in the interviews.

I conducted interviews based on the feature release schedule, with team members observing.

I led weekly meetings to review quant and qual findings, adapt interview questions as needed, and address any critical action items.

A teacher explaining the value of the new ‘lock lexile’ feature to her workflow.

My UXR timeline, which weaves in user testing and check-ins over the course of two months.

[ Deliverables & Impact ]

Bi-Weekly 1-Pager

This brief summary, released bi-weekly as new features were prototyped and tested, kept stakeholders engaged and agile throughout the process. It included average usability and usefulness ratings for each feature. It also highlighted what was (and wasn’t) working well, with actionable recommendations.

Through these regular updates, my team was able to prioritize which features to refine and to fix some critical bugs before deployment.
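As a rough illustration of the per-feature averages reported in the 1-pager, the sketch below shows one way interview ratings could be rolled up. The function name `summarize_ratings`, the record fields, and the numeric rating scale are assumptions for this example; the study's actual scale and tooling are not described here.

```python
from collections import defaultdict
from statistics import mean


def summarize_ratings(responses):
    """Average usability and usefulness ratings per feature.

    `responses` is a list of dicts with the (hypothetical) keys
    'feature', 'usability', and 'usefulness'.
    """
    by_feature = defaultdict(lambda: {"usability": [], "usefulness": []})
    for response in responses:
        scores = by_feature[response["feature"]]
        scores["usability"].append(response["usability"])
        scores["usefulness"].append(response["usefulness"])

    # Collapse each list of ratings into a mean rounded to two decimals.
    return {
        feature: {name: round(mean(values), 2) for name, values in scores.items()}
        for feature, scores in by_feature.items()
    }
```

Keeping usability and usefulness as separate averages preserves the distinction between a feature that is easy to use and one that teachers actually want.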

Final Summary

After all features had been tested, I produced a final summary outlining the takeaways from each feature, as well as short- and long-term recommendations.

The learnings from this study provided the validation needed to roll out critical features for back-to-school. As hypothesized, teachers with the new set of features created more assignments on the platform than before.